I classified 35 posts (excluding replies) into 7 categories.

| Category | Posts | Share |
|---|---|---|
| 🏗️ 3D Reconstruction & SLAM | 11 | ██████████ 31% |
| 🚗 Autonomous Driving | 3 | ███ 9% |
| 🤖 Robotics | 1 | █ 3% |
| 🧠 VLA & Foundation Models | 3 | ███ 9% |
| 📄 Paper Introductions | 2 | ██ 6% |
| 🔧 OSS & Tools | 4 | ████ 11% |
| 💬 Other | 11 | ██████████ 31% |
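The shares in the table are consistent with dividing each category's count by the 35 posts and rounding to the nearest whole percent. Here is a minimal sketch that reproduces them, assuming that rounding rule. The names `COUNTS` and `shares` are hypothetical. The bar lengths are not reproduced because their scaling rule is not stated.

```python
# Category counts copied from the table above (35 posts, replies excluded).
COUNTS = {
    "3D Reconstruction & SLAM": 11,
    "Autonomous Driving": 3,
    "Robotics": 1,
    "VLA & Foundation Models": 3,
    "Paper Introductions": 2,
    "OSS & Tools": 4,
    "Other": 11,
}


def shares(counts: dict[str, int]) -> dict[str, int]:
    """Return each category's share of all posts as a whole percent.

    Assumption: share = round(100 * count / total); the original rule is not stated.
    """
    total = sum(counts.values())
    return {name: round(100 * n / total) for name, n in counts.items()}


if __name__ == "__main__":
    for name, pct in shares(COUNTS).items():
        print(f"{name}: {COUNTS[name]} posts, {pct}%")
```

With these counts, the rounded shares also add up to exactly 100%, so no manual adjustment is needed.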

🏆 Top 3 Most Popular

🥇 1st place

| Metric | Count |
|---|---|
| RT | 76 |
| Like | 484 |
| Views | 21000 |

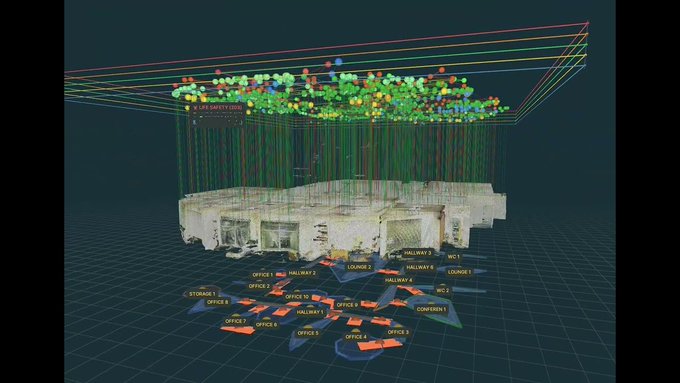

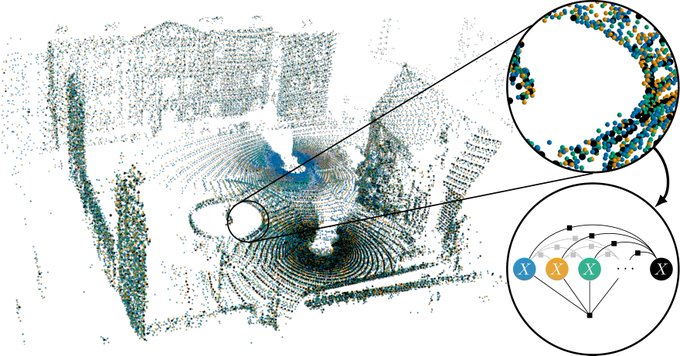

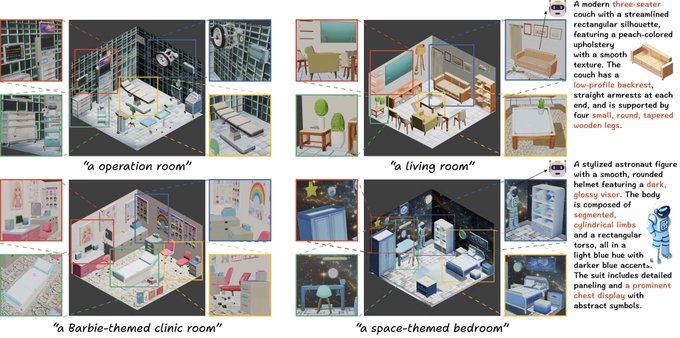

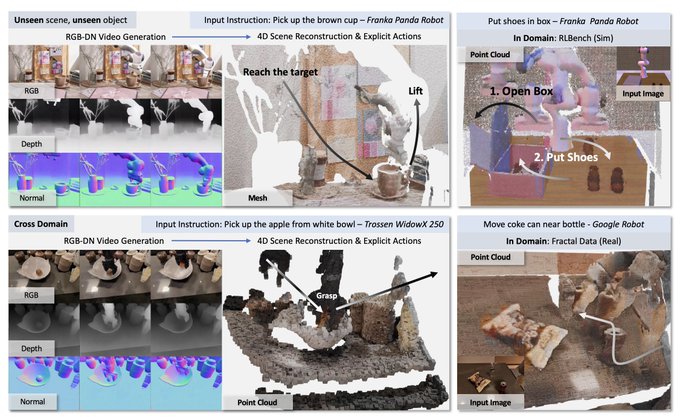

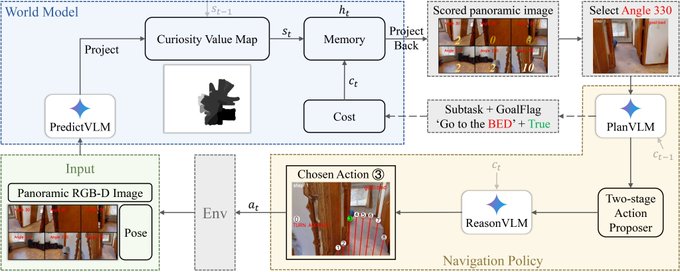

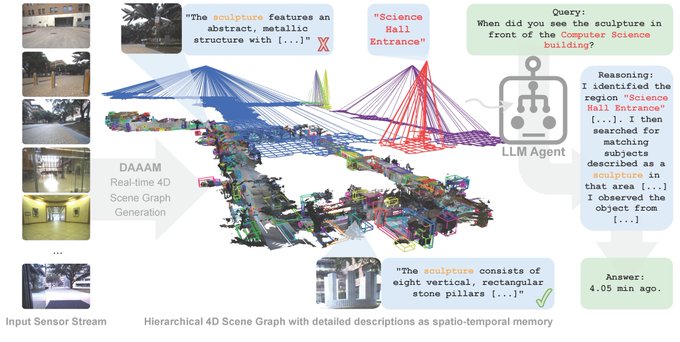

Describe Anything, Anywhere, at Any Moment (DAAAM): builds a hierarchical 4D scene graph as spatio-temporal memory, enabling embodied agents to describe anything, anywhere, at any moment.

🔗 View post

🥈 2nd place

| Metric | Count |
|---|---|
| RT | 31 |
| Like | 240 |
| Views | 14000 |

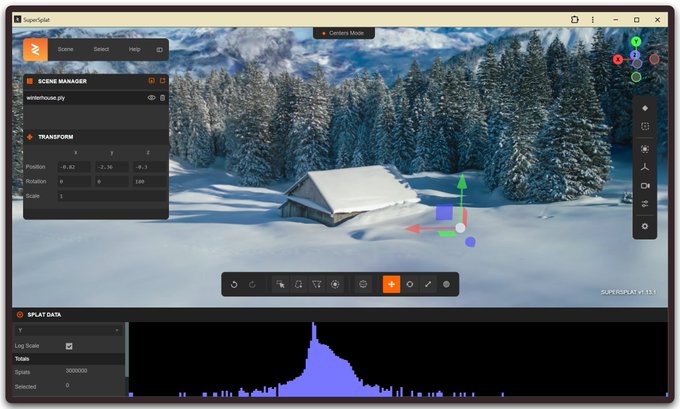

SuperSplat (3D Gaussian Splat Editor): The SuperSplat Editor is a free and open source tool for inspecting, editing, optimizing and publishing 3D Gaussian Splats. It is built on web technologies and runs in the browser, so there’s nothing to download or…

🔗 View post

🥉 3rd place

| Metric | Count |
|---|---|
| RT | 31 |
| Like | 214 |
| Views | 10000 |

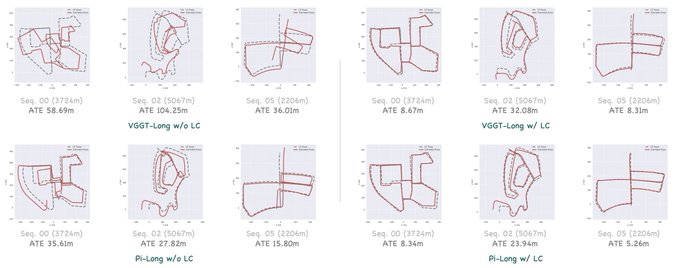

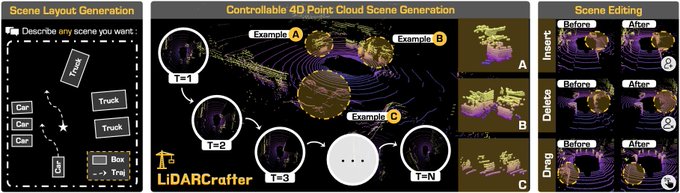

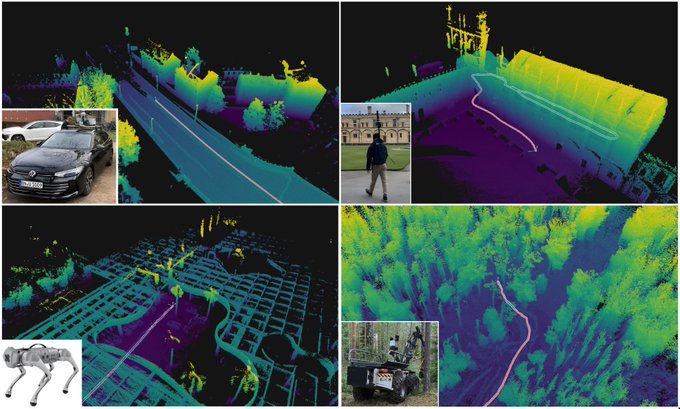

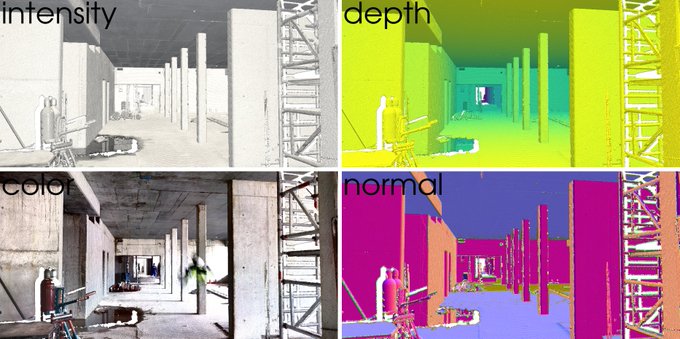

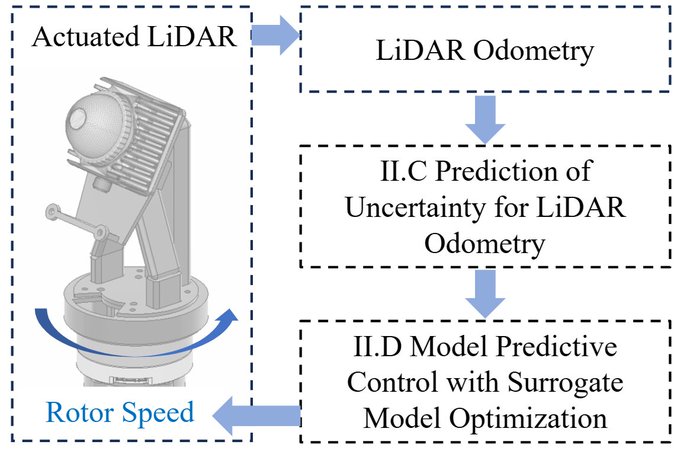

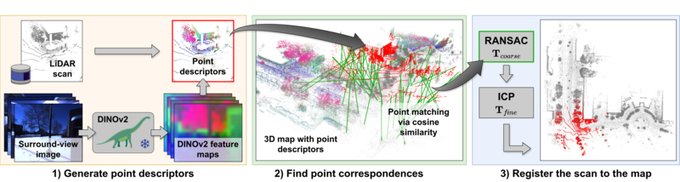

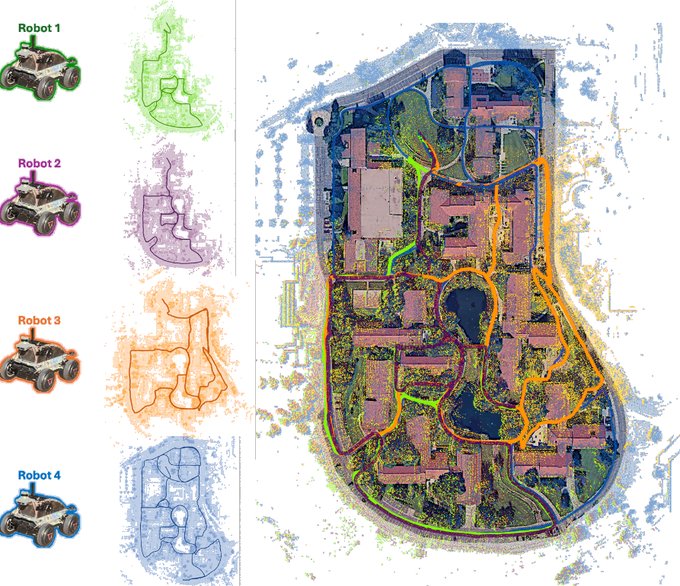

SiMpLE: a simple LiDAR odometry method that reduces the complexity and configuration burden of localization algorithms.

🔗 View post

📂 Highlights by Category

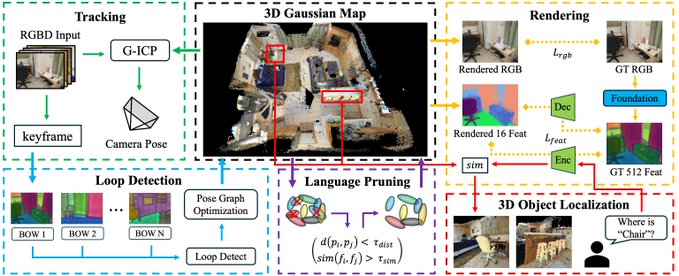

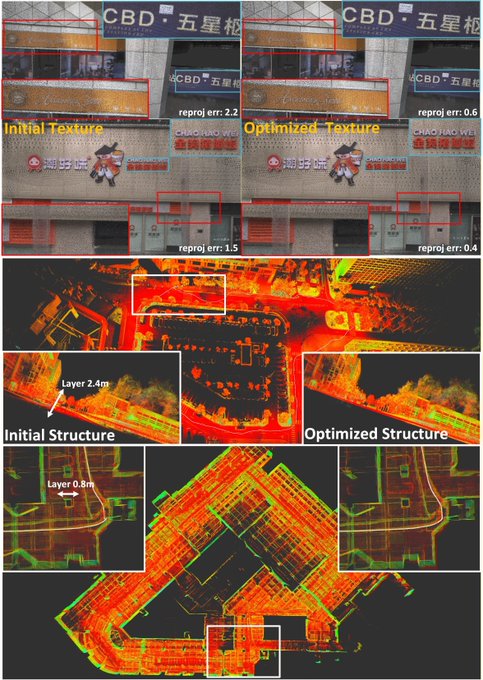

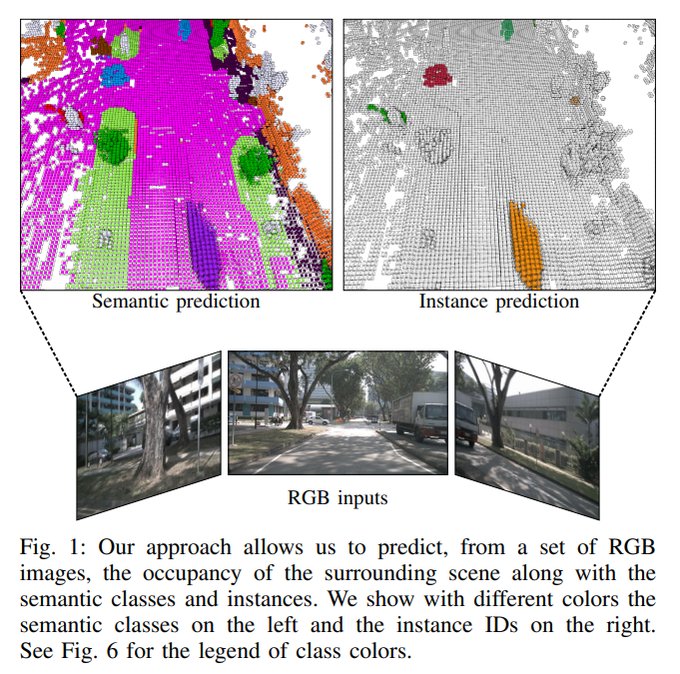

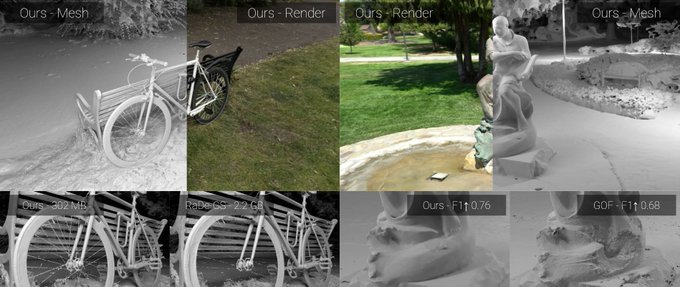

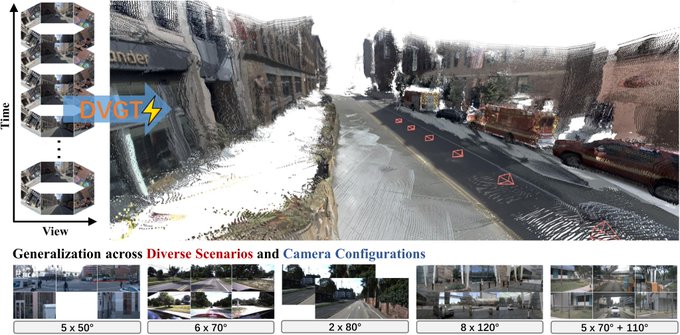

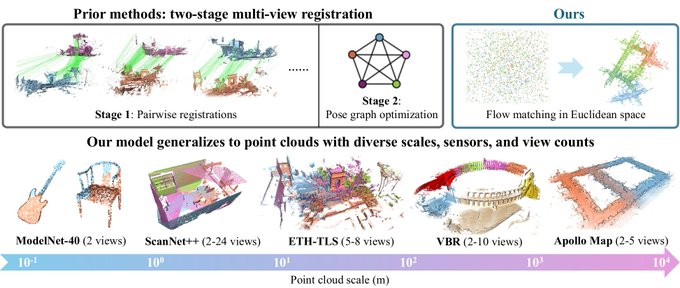

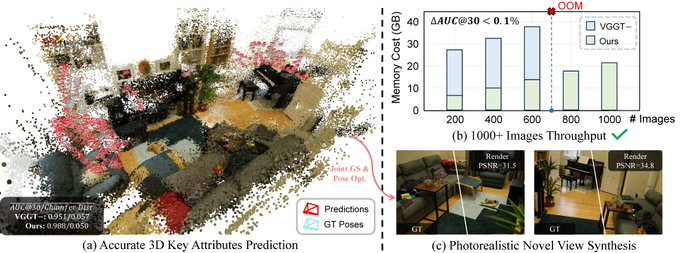

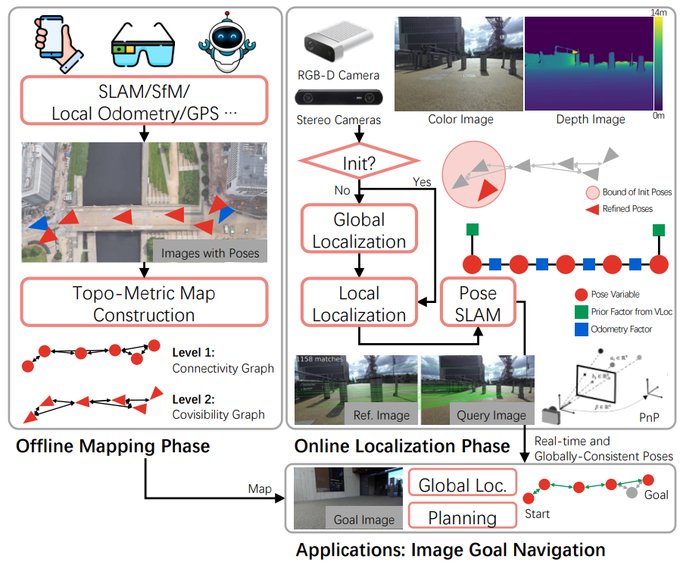

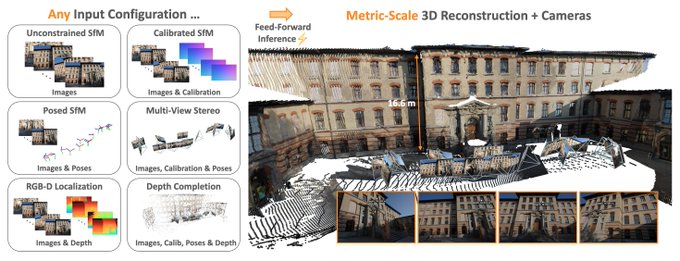

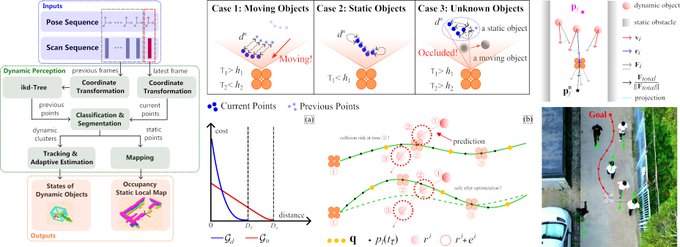

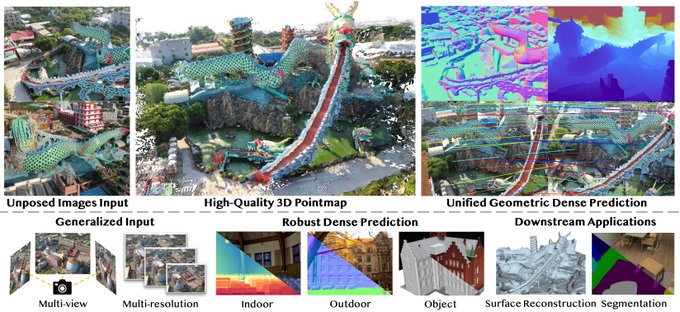

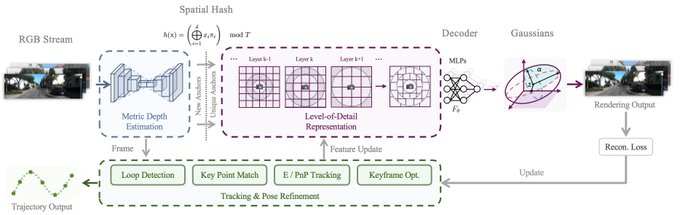

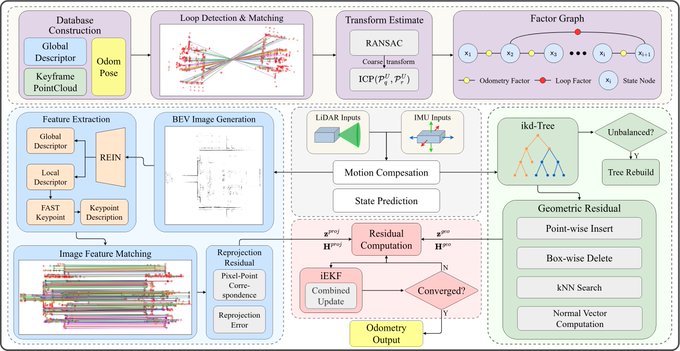

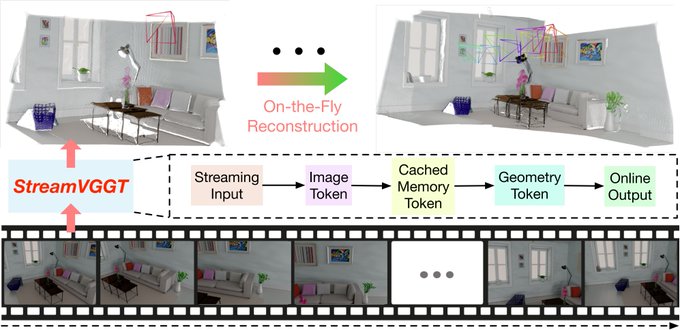

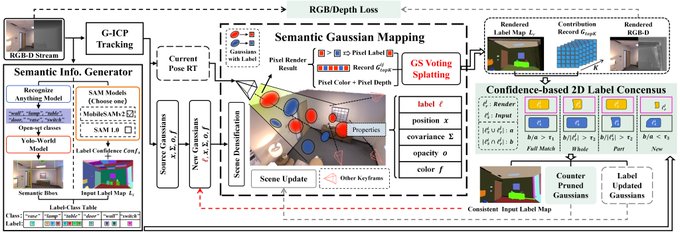

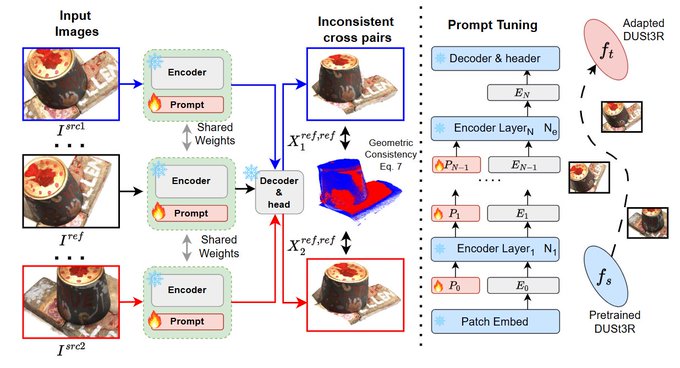

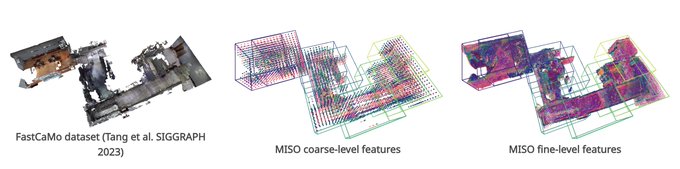

🏗️ 3D Reconstruction & SLAM

11 posts

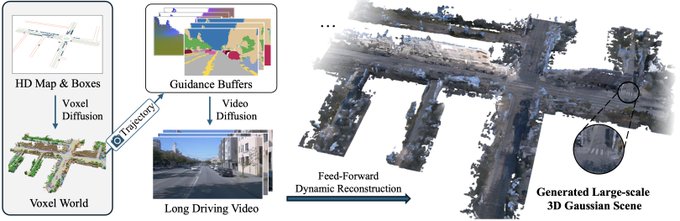

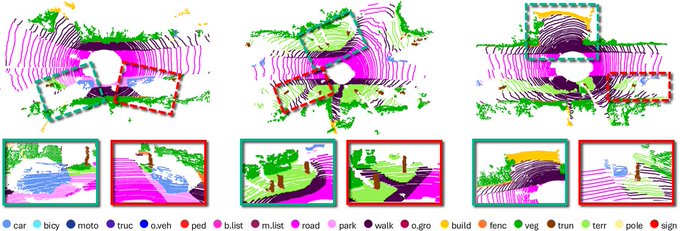

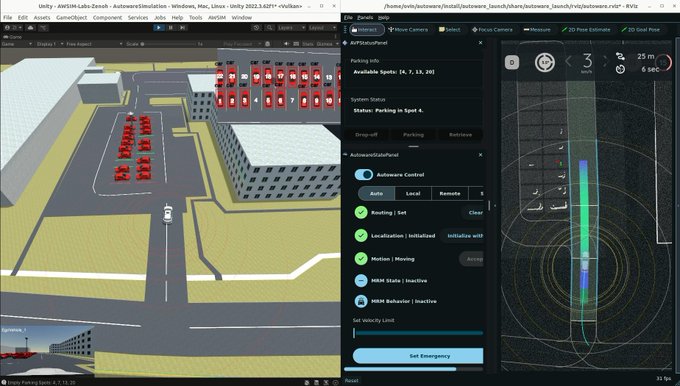

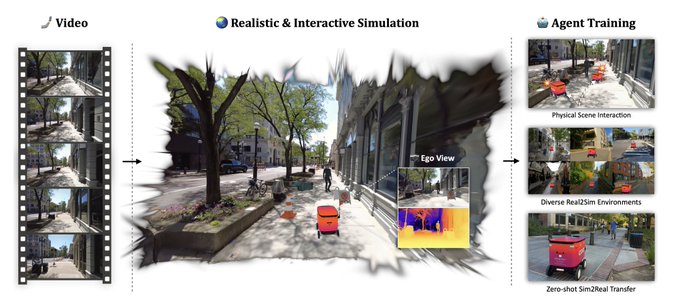

🚗 Autonomous Driving

3 posts

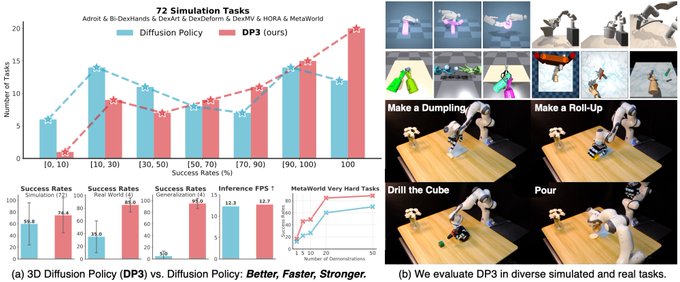

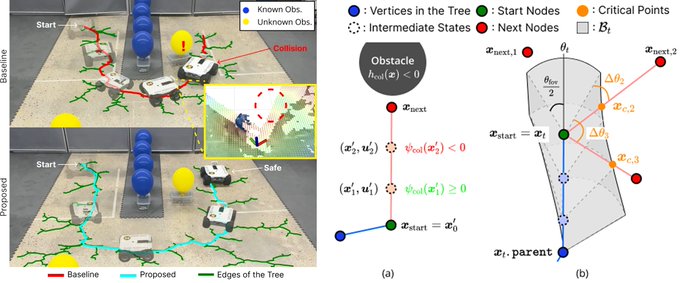

🤖 Robotics

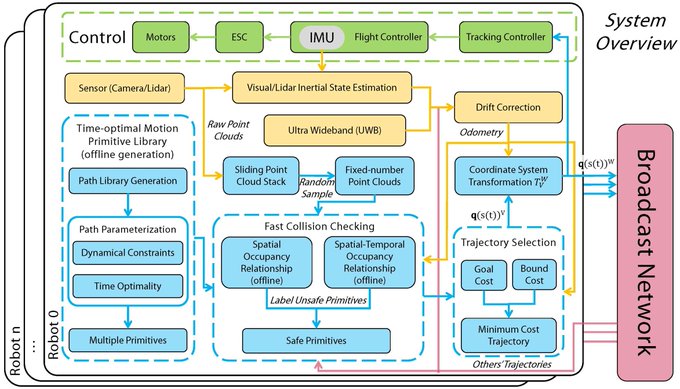

1 post

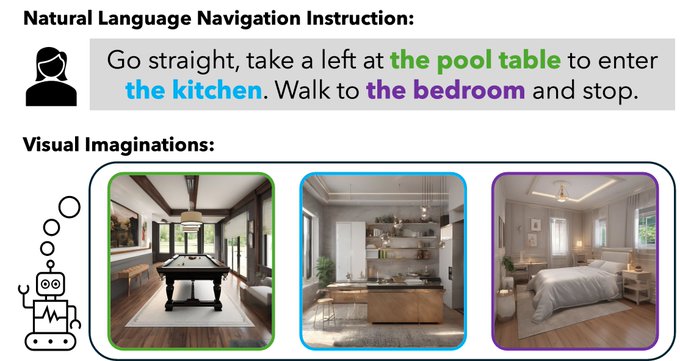

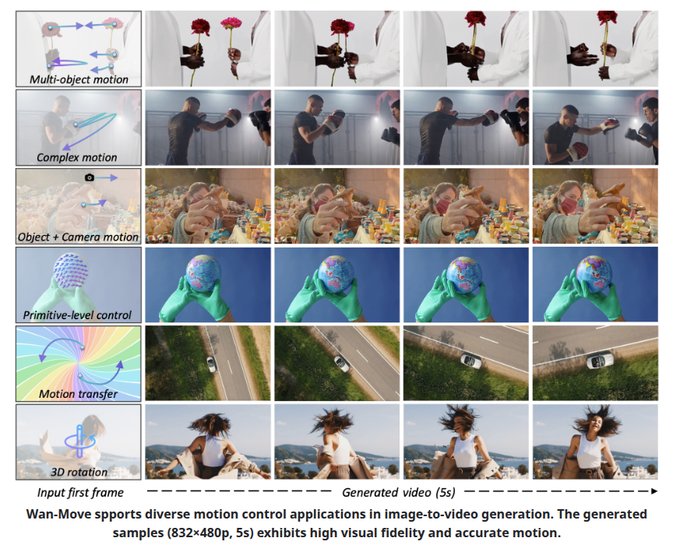

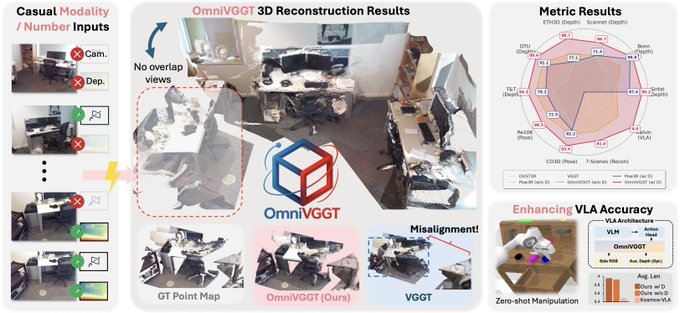

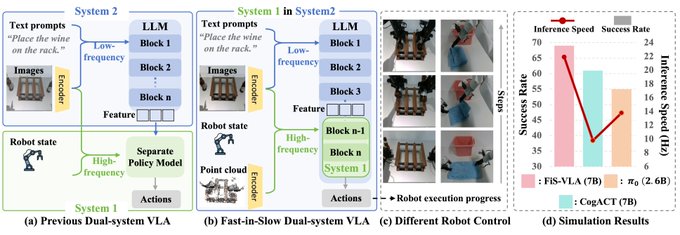

🧠 VLA & Foundation Models

3 posts

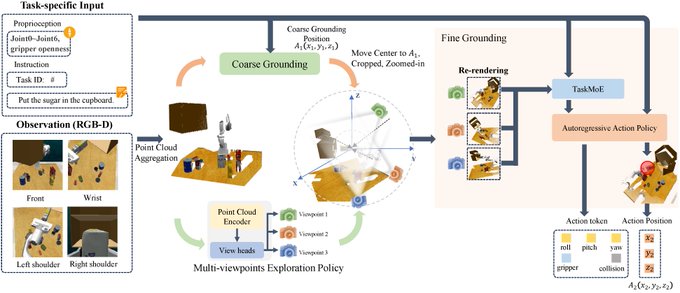

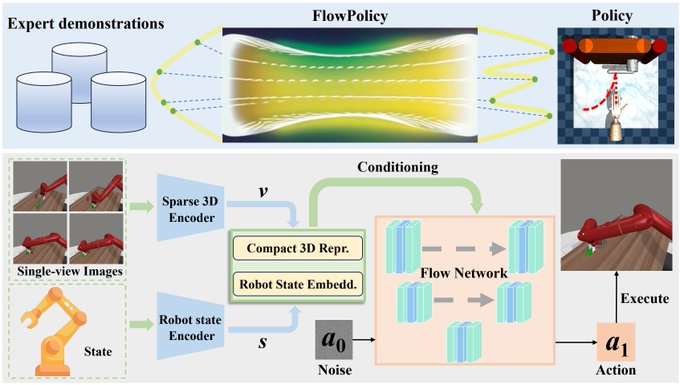

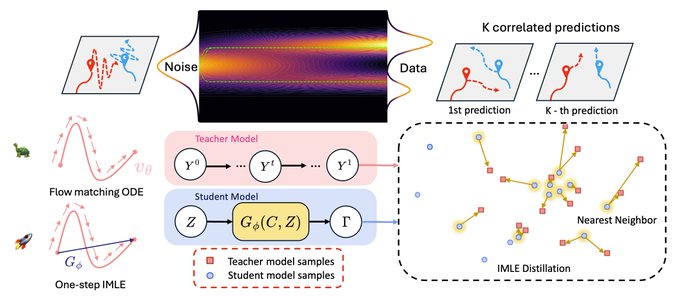

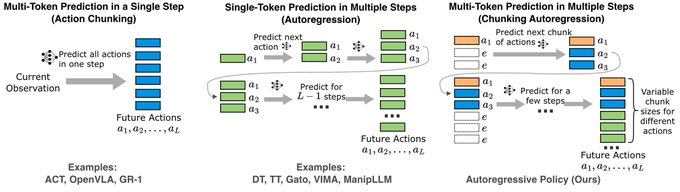

- ACG: Action Coherence Guidance for Flow-based Vision-Language-Action Models (ICRA 2026). Action Coherence Guidance (ACG) is a training-free, test-time … (♥ 120)

- VLAExplain: Interpreting Vision-Language-Action (VLA) Models. VLAExplain is an interpretability toolkit designed to help users visually understand the… (♥ 112)

📄 Paper Introductions

2 posts

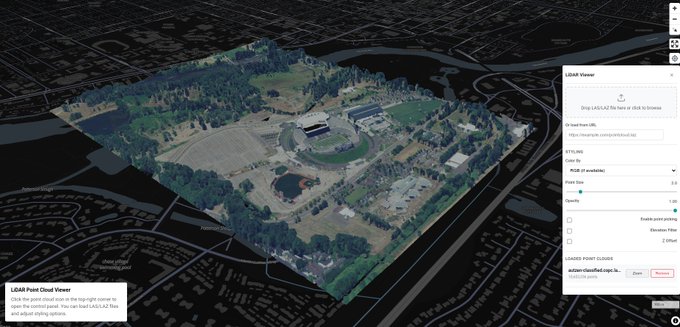

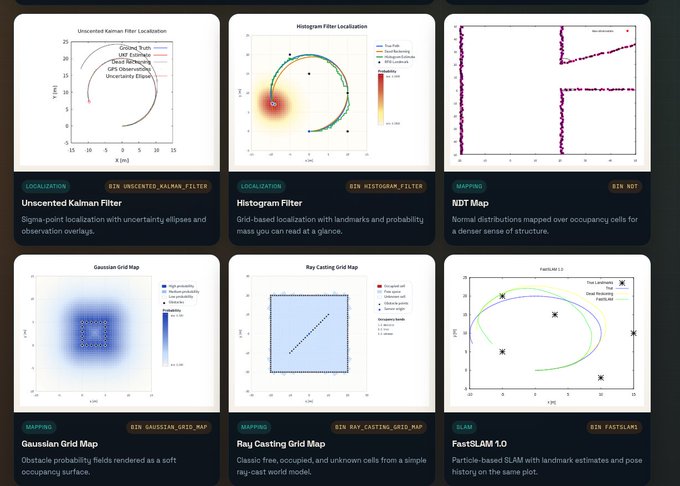

🔧 OSS & Tools

4 posts

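- I wonder what the new trends in robotics OSS are right now (♥ 19)

- I'm starting to want to push open-source activity out in the world a bit more (♥ 12)

- Maybe I'll add a bit more of a twist to my OSS introductions (♥ 2)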

💬 Other

11 posts

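- I thought that for technical screening in hiring today, a design test might be better than a coding test, but design is hard to evaluate, so it can't be adopted right away. An AI that automates part of that evaluation would probably sell. (♥ 8)

- Ooh 👀 Mitsubishi Electric invested 5 billion yen in the AI startup "燈"; an interview with its CEO! What is the true identity of this "hidden unicorn" spun out of the University of Tokyo's Matsuo Lab? Profitable since its founding, 40% of its engineers are University of Tokyo graduates, and full in-office attendance as a rule [Rebroadcast], March 3, 2026 (♥ 6)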